Since the early days of 3D graphics on computers way back in the 1990s (that’s basically three centuries ago), there have been two major players in the field: Nvidia and ATI. There was a third major player called 3dfx, but after some missteps and maybe mismanagement it was bought by Nvidia, while ATI was bought by AMD. Together, Nvidia and AMD own 99% of the discrete GPU market, with Nvidia the king of the hill at 83%.

Other than Nvidia and AMD, Intel has a minuscule share with the integrated graphics built into its Core chips. These are so underpowered that PC manufacturers add discrete chips from AMD and Nvidia to provide the graphics muscle needed for heavy lifting like video editing or gaming. Now there’s another player in town, and he’s carrying a big stick. That player’s name is Apple, but the real question is this: is the stick big enough?

M1 Pro / M1 Max

When Apple first announced its move to Apple Silicon, few doubted that Apple could do it, since it had done so twice before with success. But when Apple unveiled the M1, a chip with everything built in, including graphics cores, some were skeptical about how good it would really be. Doing graphics in a highly optimized smartphone environment like iOS is one thing, but can it go full-on in a highly customized macOS environment?

Then the reviews came in. The M1 had the most powerful integrated graphics at launch, and the competition still has not caught up with Apple. The ability to edit 4K video and play games at respectable frame rates, in a Mac Mini that costs only $699 and consumes only around 10-15W of power, is astounding. It is the best integrated graphics solution ever made, a sophisticated design, but nothing that discrete graphics solutions from Nvidia and AMD can’t handle.

In October 2021, Apple finally updated the MacBook Pro after spending a year updating all its consumer-level Macs. The MacBook Pro was highly anticipated because it is supposed to represent the high-end desktop-replacement laptop. Traditionally, Apple, like other PC manufacturers, would take the best CPU on the market (Intel at that time) and pair it with the best AMD GPU (Apple has a sour relationship with Nvidia). So you would get something like a desktop PC setup, but in miniaturized form.

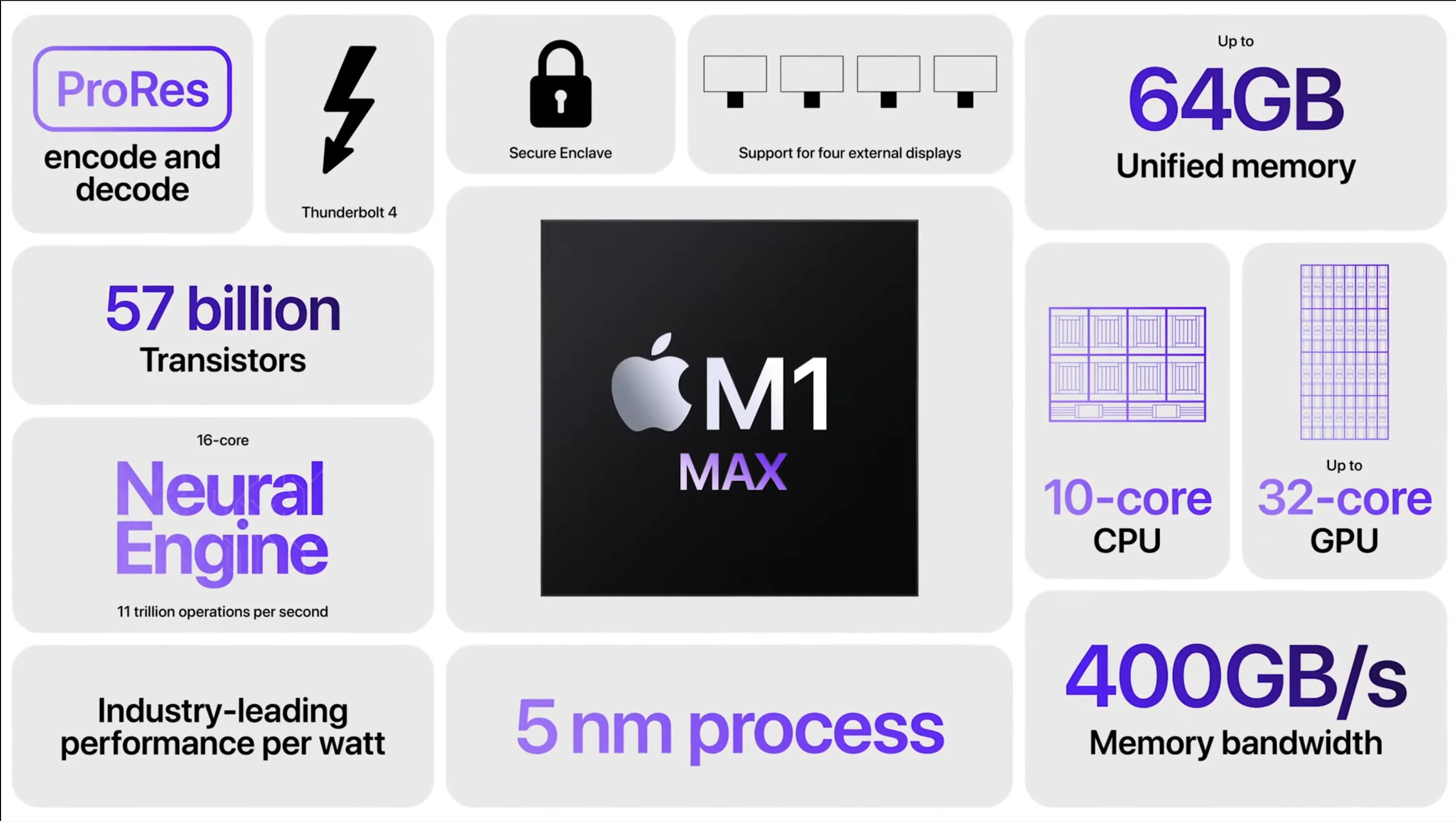

Apple flipped the script by providing a monolithic solution: a huge System-on-Chip (SOC) that packs almost everything needed into a single chip. The SOC integrates the CPU and GPU, among other things. Apple is also building an ecosystem, so the chip has other features such as neural network cores, a custom I/O controller and media encoder / decoder cores to handle various workloads. Instead of one SOC, Apple introduced two, dubbed M1 Pro and M1 Max, with the major difference being the number of graphics cores.

Between roughly half and two-thirds of the SOC real estate, depending on the chip, is occupied by the GPU and media encoder / decoder. The M1 Pro has up to 16 GPU cores while the M1 Max has up to 32. Through binning, there is also a 14-core option for the M1 Pro and a 24-core option for the M1 Max. Each core has 128 execution units, and each unit can process 24 threads at a time.
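The per-core figures multiply out to chip-wide totals. Here is a minimal sketch in Python that takes the 128 execution units per core and 24 threads per unit quoted above as given; the function and constant names are only illustrative.

```python
# Figures quoted in the text: 128 execution units per GPU core,
# and 24 threads in flight per execution unit.
EXECUTION_UNITS_PER_CORE = 128
THREADS_PER_UNIT = 24


def gpu_totals(cores):
    """Return (execution units, concurrent threads) for a GPU core count."""
    units = cores * EXECUTION_UNITS_PER_CORE
    return units, units * THREADS_PER_UNIT


# Core counts mentioned in the text, including the binned parts.
for name, cores in [("M1 Pro (binned)", 14), ("M1 Pro", 16),
                    ("M1 Max (binned)", 24), ("M1 Max", 32)]:
    units, threads = gpu_totals(cores)
    print(f"{name}: {cores} cores, {units} execution units, {threads} threads")
```

For the full 32-core M1 Max this works out to 4,096 execution units and 98,304 threads in flight, and exactly half of that for the 16-core M1 Pro.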

The GPU memory is shared with the CPU (and the rest of the SOC), but you can have up to 64GB of unified memory, far more than the 8 to 16GB carried by the laptop GPUs discussed below. The memory interface is LPDDR5, which translates to around 50GB/s per channel. The M1 Pro and M1 Max GPUs are augmented by media engine cores, dedicated hardware for processing ProRes, H.264 and H.265 files.
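The per-channel figure follows from the memory's transfer rate and bus width. The rough sketch below assumes LPDDR5 running at 6,400 MT/s, a 64-bit slice per "channel", and total bus widths of 256 bits for the M1 Pro and 512 bits for the M1 Max. None of those numbers appear in the text above, so treat them as assumptions.

```python
# ASSUMPTION: LPDDR5 at 6400 mega-transfers per second (not stated in the article).
LPDDR5_MEGATRANSFERS_PER_S = 6400


def bandwidth_gb_per_s(megatransfers_per_s, bus_width_bits):
    """Peak bandwidth in GB/s = transfers per second * bytes per transfer."""
    bytes_per_transfer = bus_width_bits / 8
    return megatransfers_per_s * 1e6 * bytes_per_transfer / 1e9


# ASSUMPTION: bus widths below are illustrative, not taken from the article.
for label, width in [("one 64-bit slice", 64),
                     ("M1 Pro, 256-bit bus", 256),
                     ("M1 Max, 512-bit bus", 512)]:
    gbps = bandwidth_gb_per_s(LPDDR5_MEGATRANSFERS_PER_S, width)
    print(f"{label}: {gbps:.1f} GB/s")
```

Under those assumptions a single 64-bit slice comes to 51.2GB/s, in line with the roughly 50GB/s quoted above, and the full buses to about 205GB/s and 410GB/s.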

Nvidia GeForce RTX 30-series

Nvidia was one of the early companies making discrete GPUs, back when people discovered the need for dedicated 3D rendering hardware for their apps and games. Today, Nvidia dominates the GPU market with its GeForce line for consumers and its Quadro line for professional apps. It has also branched out into other areas such as automotive and cloud computing.

The GeForce RTX 30-series represents the latest and greatest in Nvidia’s long line of consumer-focused discrete GPUs that take 3D (and sometimes 2D) rendering workloads off the CPU. The GPUs come in two versions: the performance-focused desktop version and the energy-conscious laptop version. Each model in the series has a number that indicates its tier: the higher the number, the more powerful the GPU. The range runs from 3050 to 3080 in both laptop and desktop versions, while the 3090 is reserved for the desktop.

While the numbering system is the same for desktop and laptop, there are significant differences between the two versions. At each level, the desktop version has more CUDA cores than the laptop version and generally uses more power. CUDA cores are the basic building blocks that Nvidia GPUs use for computation. The desktop 3080 has 8704 CUDA cores, 10GB of GDDR6X memory and draws 320W, while the laptop 3080 has only 6144 CUDA cores, 8 or 16GB of GDDR6 memory and tops out at 150W TDP. Furthermore, when you unplug your laptop, the GPU throttles its power usage down to 80W. That is still high, but in theory you lose about half of your graphics capability when you switch to battery power.
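To put the desktop, laptop and battery figures side by side, they can be turned into simple percentages. This small sketch uses only the numbers quoted in this section; the names are illustrative.

```python
# Figures quoted in the text for the RTX 3080.
DESKTOP = {"cuda_cores": 8704, "power_w": 320}
LAPTOP = {"cuda_cores": 6144, "power_w": 150}
LAPTOP_BATTERY_POWER_W = 80


def percent(part, whole):
    """Express part as a percentage of whole, rounded to one decimal place."""
    return round(100 * part / whole, 1)


core_share = percent(LAPTOP["cuda_cores"], DESKTOP["cuda_cores"])
power_share = percent(LAPTOP["power_w"], DESKTOP["power_w"])
battery_share = percent(LAPTOP_BATTERY_POWER_W, LAPTOP["power_w"])

print(f"Laptop CUDA cores vs desktop: {core_share}%")
print(f"Laptop power limit vs desktop: {power_share}%")
print(f"Battery power limit vs plugged-in laptop: {battery_share}%")
```

The laptop part keeps roughly 71% of the CUDA cores on about 47% of the power budget, and on battery it is held to about 53% of its plugged-in limit. A power limit is not the same thing as a frame rate, so "half" is a rule of thumb rather than a measurement.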

AMD Radeon RX 6000-series

ATI started in the graphics game almost a decade earlier than Nvidia. When 3dfx, a powerful GPU maker in the 1990s, was bought by Nvidia, ATI became the last competitor standing in the way of Nvidia’s quest for total dominance. AMD later bought ATI, and with a parent like AMD, ATI became a formidable challenger to Nvidia’s dominance.

Just like Nvidia, AMD has two distinct lines of GPUs: Radeon for consumers and Radeon Pro for professionals who use 3D rendering apps like Blender.

The Radeon RX 6000-series represents AMD’s latest and greatest GPUs. Just like Nvidia, it has a numbering system that corresponds to the performance of the GPU. Just like Nvidia, each tier has a laptop and a desktop version. And just like Nvidia, the desktop version is focused on all-out performance, while the laptop version is focused on efficiency.

The Radeon RX 6000-series runs from the 6600 at the lower end all the way up to the 6900 for the ultimate version. There is a desktop and a laptop version at each level except the RX 6900, which is desktop only. The RX 6800 gives you 60 compute units and 16GB of GDDR6 RAM, and uses 250W of power. The RX 6800M, the mobile version of the RX 6800, has 40 compute units and 12GB of GDDR6 RAM, and tops out at 145W of power.
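One crude way to see the "efficiency focused" design of the mobile part is to compare how many compute units each chip fits into its power budget. The sketch below uses only the figures quoted above. Compute units per watt ignores clock speeds and memory, so it is a rough proxy rather than a benchmark, and the names are illustrative.

```python
# Figures quoted in the text.
RX_6800 = {"compute_units": 60, "power_w": 250}
RX_6800M = {"compute_units": 40, "power_w": 145}


def compute_units_per_100w(gpu):
    """Compute units available per 100W of rated power, to one decimal place."""
    return round(100 * gpu["compute_units"] / gpu["power_w"], 1)


desktop_density = compute_units_per_100w(RX_6800)
mobile_density = compute_units_per_100w(RX_6800M)

print(f"RX 6800:  {desktop_density} compute units per 100W")
print(f"RX 6800M: {mobile_density} compute units per 100W")
```

By this measure the desktop card has 24.0 compute units per 100W against 27.6 for the mobile version, so the laptop chip gives up a third of its compute units but uses its power budget a little more densely.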

Benchmarks

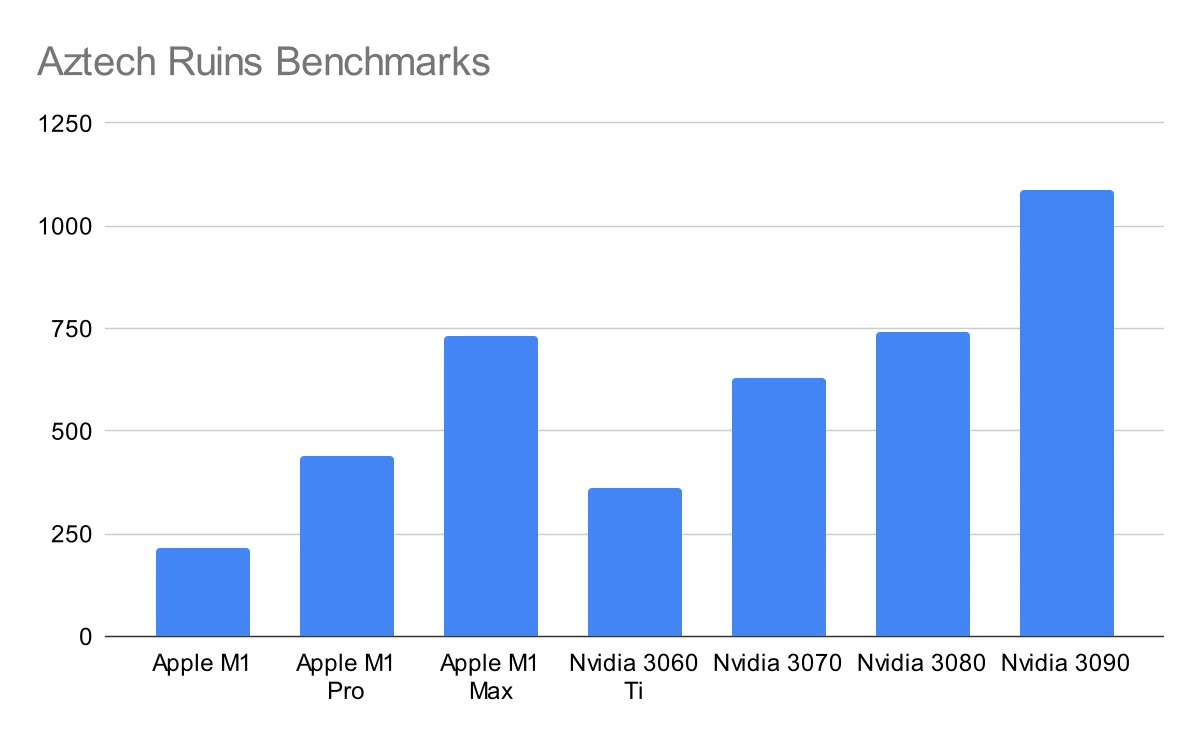

Now here’s the moment we have been waiting for: how do the new M1 Pro and M1 Max chips do against the best in the industry? Does the 32-core M1 Max measure up against the Nvidia 3080s and the AMD Radeon 6600M?

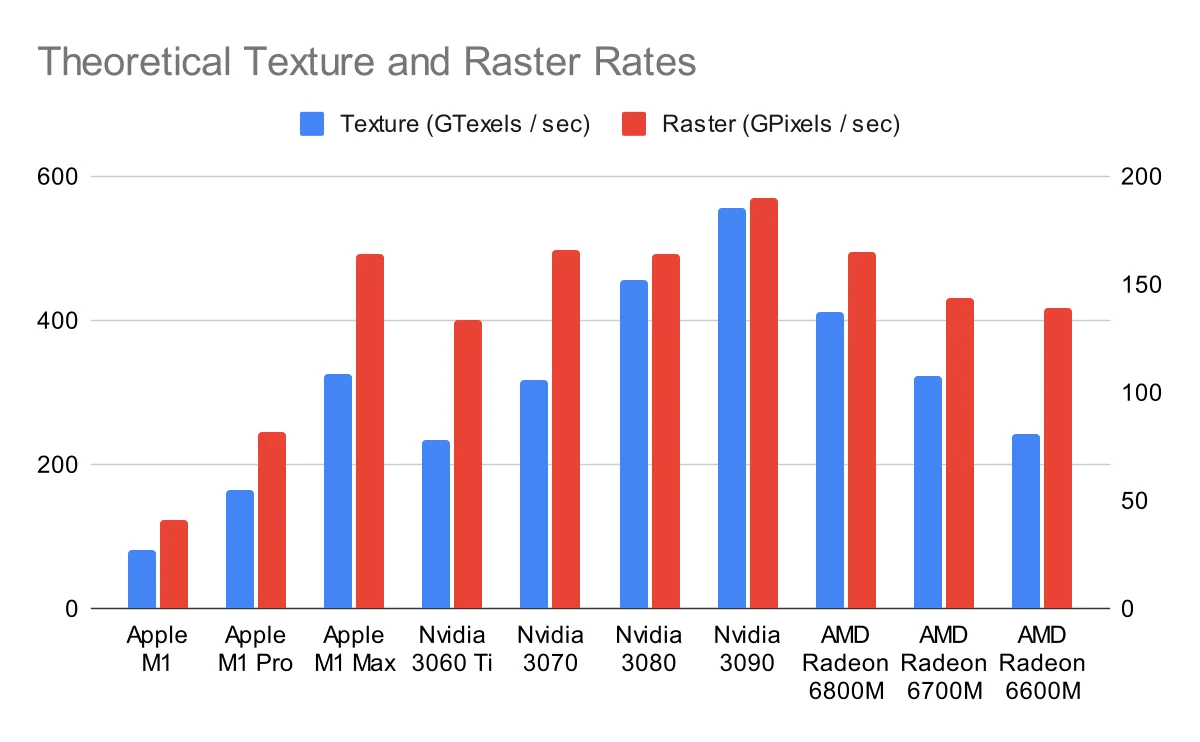

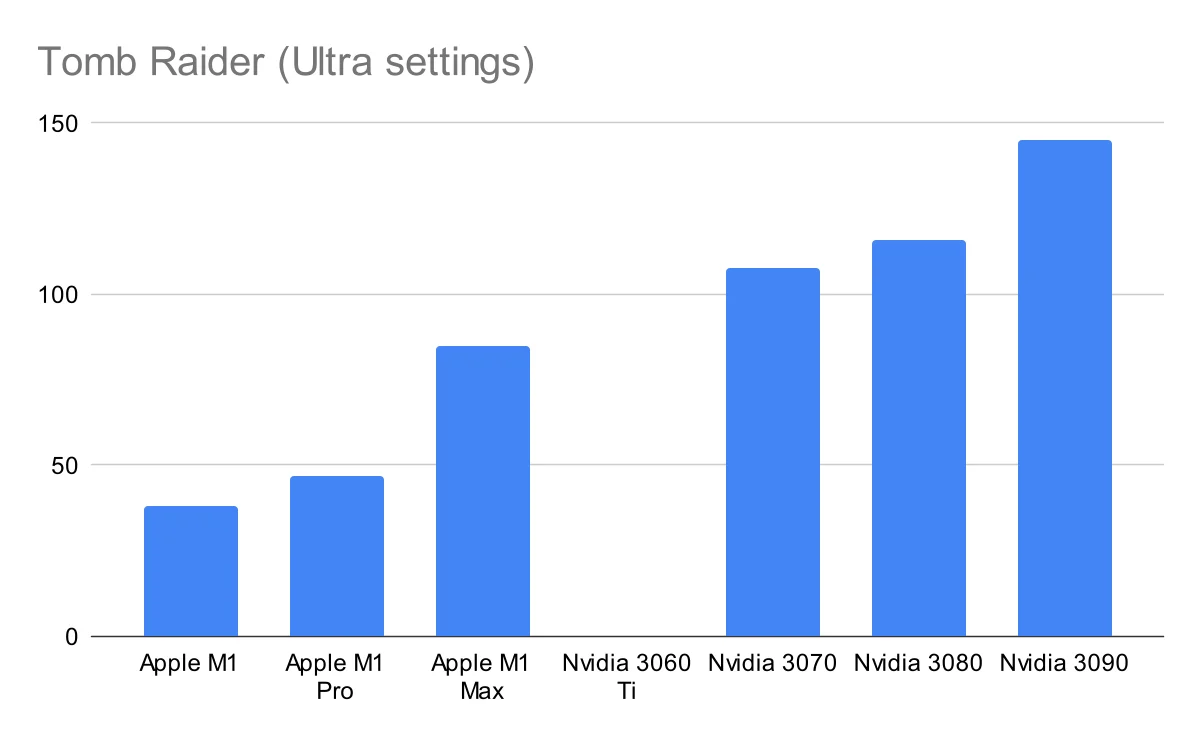

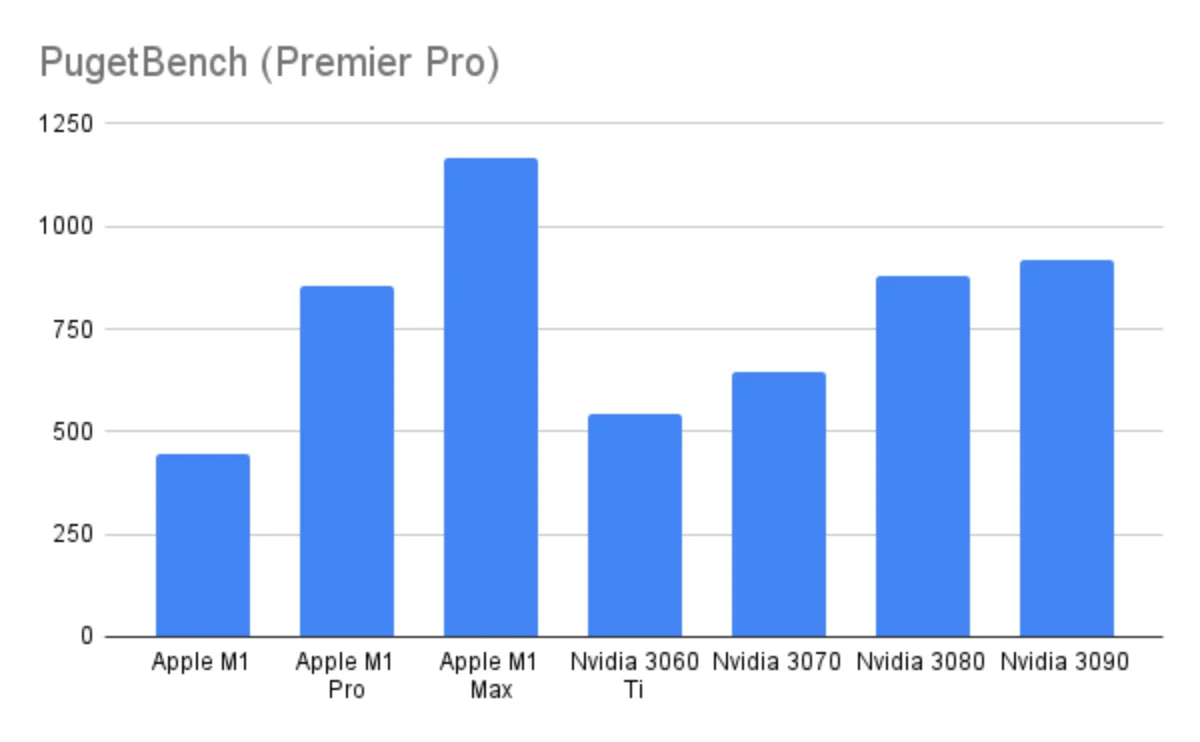

Theoretical performance puts the M1 Max between the laptop versions of the RTX 3070 and 3080. It also shows that the new M1 Max is more powerful than AMD’s offerings. Gaming benchmarks follow these trends, but professional app benchmarks show a different picture: both the M1 Pro and M1 Max are faster in Premiere Pro than the RTX 3080. If you go all in on the Apple ecosystem by editing ProRes in Final Cut Pro, there’s simply no laptop solution that can beat the M1 Pro or M1 Max.

Basically, the laptop chip to beat is the Nvidia 3080 laptop version. Apple has demonstrated that the M1 Pro and M1 Max can easily beat anything that AMD throws at them, but they still trail, albeit slightly, behind Nvidia’s mobile flagship, the RTX 3080. The gulf grows wider in gaming benchmarks, where the RTX 3080 has a 10-20% lead over the M1 Max. Professional apps like Premiere Pro, however, are where the media engine starts to shine.

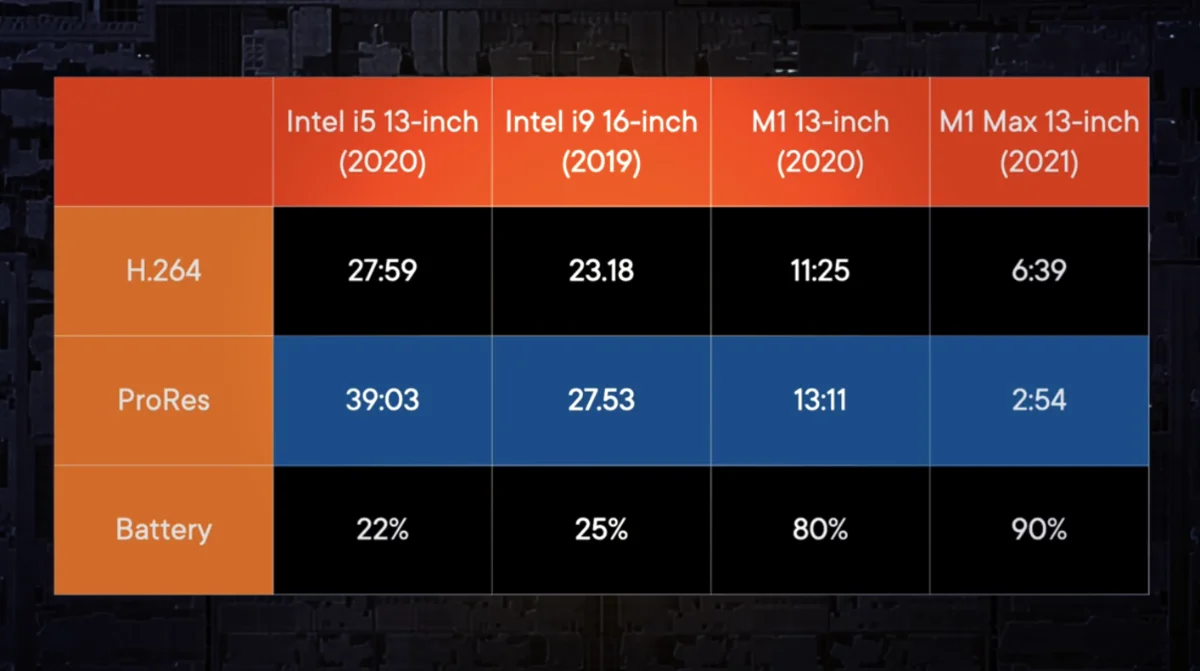

A disclaimer about synthetic GPU benchmarks: they sometimes do not tell the whole story, since GPU performance can be influenced by how good your CPU and RAM are. A faster CPU and RAM can compensate for a lesser GPU in certain tasks. There is another caveat with Premiere Pro or Final Cut Pro benchmarks: Apple’s M1 Pro and M1 Max are highly optimized for video work thanks to their media engine. They basically obliterate render times, especially with ProRes codecs.

Tests show that the RTX 3080 laptop is indeed better at gaming, but it throttles down when run on battery power. In the demo video (see Resources), the battery power comparison starts at the 6:00 mark. There’s also the matter of drivers and compatibility with the OS. Many AAA games are built with Windows in mind and optimized for it. I could go off on a tangent about how games are not optimized for macOS, but the key takeaway is this: it’s a catch-22 situation for gaming on macOS. Studios that make AAA games do not spend resources optimizing their codebases for macOS because there aren’t many users on it, and there aren’t many users gaming on macOS because AAA studios are not on it.

A final but often overlooked issue is how the chips behave on battery. While the RTX 3070 and 3080 can beat the M1 Max in a few applications, especially in gaming, both Nvidia chips throttle down hard when running on battery. Tests show that gaming laptops with the RTX 3080 beat the M1 Max by almost double at full power, but drop to 30 fps on battery. The reason is that the RTX consumes a lot of power, and to keep battery life acceptable it has to throttle down hard, which in turn degrades performance. As far as the tests go, the M1 Pro and M1 Max do not throttle down on battery power, so you get the same performance regardless of power source. That is a fact to consider if you are always on the move with no access to a power socket.

Conclusion

So, the conclusion is that the M1 Pro is a respectable GPU for the work that you are doing. The M1 Pro is the one to get if you are doing a reasonable amount of video editing, a little gaming and you do not require a heavy duty GPU. If you are in the Apple ecosystem doing ProRes on FCP, then the only SOC that is better is the M1 Max.

The key question to ask is this: does your workflow fit what Apple has in mind? If your workflow is video / photo editing using ProRes in Final Cut Pro, Nvidia can’t even come close to what the M1 Max can do. If you are more interested in playing games, Nvidia on Windows has better support and better drivers, and the games themselves are better optimized for Windows than for the Mac.

All in all, the M1 Max with 32 graphics cores serves as a shot across the bow: Apple is gunning for the performance crown. Getting games optimized for Apple would likely deliver the 5-10% increase that Apple seeks, while the release of the rumored Jade-2C (64 cores) and Jade-4C (a whopping 128 cores) SOCs would make Nvidia sound the general alarm.

Resources

A demo by Max Tech in which the RTX 3080 beats the M1 Max in a few benchmarks, only to throttle down on battery power, while the M1 Max maintains its performance even on battery.